Solving LP Relaxations of Some NP-Hard Problems Is As Hard As Solving Any Linear Program

We show that solving linear programming (LP) relaxations of many classical NP-hard combinatorial optimization problems (set cover/packing, facility location, maximum satisfiability, maximum independent set, multiway cut, three-dimensional matching, and constraint satisfaction) is as hard as solving the general LP problem. Precisely, the general LP can be reduced in nearly linear time to the LP relaxation of each of these problems. This result poses a limitation on designing algorithms to compute exact or even approximate solutions to the LP relaxations, as any lower bound on the complexity of solving the general LP problem is inherited by the LP relaxations.

D. Prusa, T. Werner (2019), Solving LP Relaxations of Some NP-Hard Problems Is As Hard As Solving Any Linear Program, SIAM Journal on Optimization. (PDF)

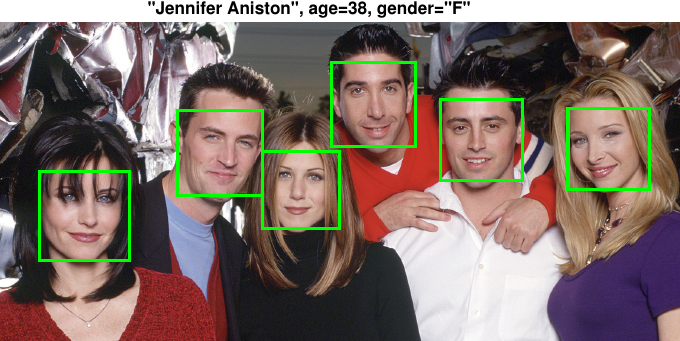

Learning CNNs from weakly annotated images

We show how to learn CNNs for face recognition using weakly annotated images where the annotation is assigned to a set of candidate faces rather than a single face like in the standard supervised setting. We use our method to create a database containing more than 300k faces of celebrities each annotated with his/her biological age, gender and identity.

V. Franc, J. Cech (2018), Learning CNNs from Weakly Annotated Facial Images, Image and Vision Computing. (PDF, BibTex )

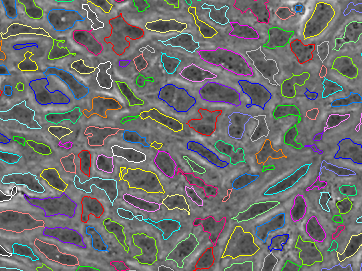

Probabilistic model for joint segmentation, detection and tracking

We have developed a novel method for joint segmentation, detection and tracking of multiple objects. The method is based on a probabilistic model that is defined implicitly in terms of a Markov chain Monte Carlo algorithm. The parameters of the model are learned using an objective based on empirical risk minimization. Our method was used by researchers from the Cells-in-Motion (CiM) Cluster of Excellence at the University of Münster for analysing the molecular mechanisms of motion and contact dynamics of endothelial cells when they form new blood vessels (full story). This work led to a joint publication in Nature Communications.

Jiahui Cao et al. (2017), Polarized actin and VE-cadherin dynamics regulate junctional remodelling and cell migration during sprouting angiogenesis, Nature Communications 8(1), (paper)

License Plate recognition and Super-resolution from Low-Resolution Videos We developed CNN architecture recognizing license plates from a sequence of low-resolution videos. Our system works reliably on videos which are unreadable by humans. We also show how to a generate super-resolution LP images from low-res videos.

V.Vasek, V. Franc, M. Urban (2018), License Plate Recognition and Super-resolution from Low-Resolution Videos by Convolutional Neural Networks, Proc. of British Machine Vision Conference. (PDF, BibTex)

Probabilistic deep networks

Deep networks with stochastic neurons as well as probabilistic generative models like Variational Autoencoders become increasingly important in Deep Learning due to the growing understanding of the importance of versatile and expressive learned (latent) representations. Our research in this area focusses on advanced learning algorithms as well as onconceptually novel approaches for semi-supervised learning for such models. read more

Two-dimensional automata and grammars

The theory of two-dimensional (2D) languages studies automata and grammars for processing 2D arrays of symbols. In our research we aim to examine such 2D models that have applications in the field of structural pattern recognition and 2D pattern matching. We have shown that variants of 2D grammars can be applied for recognition of domains such as mathematical formulas (paper), flowcharts (paper) or document layouts (paper). We have also recently described complexity of pattern matching againsts 2D languages accepted by various models of 2D automata (paper).

The Many Facets of Orthomodularity

Czech Science Foundation grant 20-09869L, 2020-22

Quantum physics requires a non-standard model of probability, admitting the description of events which are observable separately, but not simultaneously. Thus all observable events do not form a sigma-algebra, but a more general structure, typically an orthomodular lattice or poset. This makes the development of probability theory more difficult; still many results were proved under the weaker assumptions. Among them, the proof (by Bell, Kochen, Specker, and others) of the impossibility of describing quantum theory in the classical terms using so-called “hidden variables”, wrongly predicted by Albert Einstein. We contributed to improvements of this result. Further research clarifies the algebraic properties of quantum logics.

Voráček, V., Navara, M.: Generalised Kochen–Specker Theorem in three dimensions. Foundations of Physics 51 (2021), 67. DOI 10.1007/s10701-021-00476-3

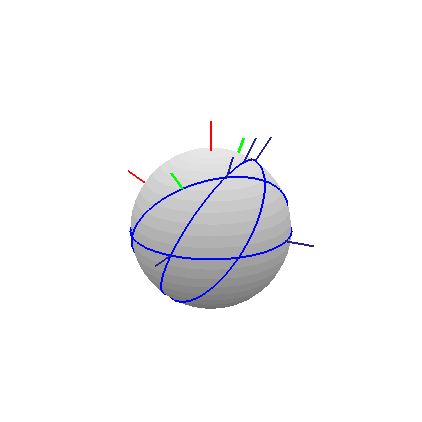

Figure: A graphical representation of the proof of Bell’s Theorem.